Services

Transform Unstructured Text into Data for Data-driven decision making

The KAPS Group’s focus is helping enterprises utilize the full potential of text analytics.

To help with this process, the KAPS Group offers text analytics consulting services in four main areas:

Latest Services: Gen AI and Text Analytics – Strategy and Mini-POC

Getting Started: Webinars, workshops, mini strategy and mini-POC, knowledge audit, and software evaluation

Development: Building a text analytics foundation as well as adding text analytics to specific applications

Applications: Generating new applications that can transform your organization

Gen AI and Text Analytics

Two New Services for Gen AI and Text Analytics

Smart Start: Think Big, Start Small, and Scale Fast

Gen AI continues to astound in the public sphere, although the situation within the enterprise is quite different. And then there are those well-known flaws and issues:

- Hallucinations – making up facts

- Lack of transparency

- Amount and cost of data needed to build an LLM – and maintain it

- Quality of data for training

- Security – jailbreaks, leaks

- Bias

- Generic and overly simple generated text

- Inability to understand complex topics

- Mismatch of public training data and enterprise text

- And then there is the issue of actual business value beyond customer support chat

Amazingly there is one solution to all of these – text analytics! With its core capabilities of auto-categorization, data extraction, and sentiment analysis, combining text analytics and Gen AI is the key to solving all these issues.

However, establishing a text analytics capability or exploring Gen AI in your enterprise are both complex – and the combination is complex squared. The KAPS Group has developed two offerings that can get you started or take any current initiatives to the next level.

The first, Understanding Text Analytics and Gen AI in Your Organization, creates a strategic understanding how the integration of text analytics and Gen AI can be established in your organization and what value you can expect. The second, A Real Life Mini-POValue, creates a concrete demo that:

- Shows the power of the integration of text analytics and Gen AI

- Provides insight into the latest techniques

- Scopes the effort level needed for a full deployment,

The two offerings can be done separately or together, depending on where you are on the text analytics / Gen AI adoption path.

If this sounds like something that you would like to learn more about, please contact Tom Reamy to set up an initial call – tomr@kapsgroup.com

Getting Started

Good Beginnings Can Save $100,000’s

Text analytics is a powerful capability that can transform how organizations utilize their unstructured text. However, text analytics is not easy to do. It involves AI, taxonomies and ontologies, semantics and computational linguistics, and a bewildering number of vendors and approaches. To get the most value from text analytics, there are three critical components:

- Know what text analytics is and what it can do

- Know what is the best software fit for your organization

- A well-thought-out development and implementation strategy

To help organizations navigate this complex landscape, the KAPS Group offers a variety of services which can be stand-alone or part of an overall text analytics process that takes organizations from a quick start to a full implementation of one or more of the myriad text analytics applications.

For organizations unfamiliar with text analytics, we recommend two introductory services:

- Introduction to Text Analytics: A one hour webinar that provides an overview of the field. This webinar creates a common understanding of text analytics with a focus on what it can do for you. This webinar can be used internally to educate and promote the use of text analytics in your organization.

- Text Analytics Workshop: A three-hour webinar that dives more deeply into how to do text analytics, the business of text analytics, and the full range of applications that are possible. This workshop is customized to your organization and typically discovers a range of potential applications, both known and novel.

The KAPS Group offers two services designed to help clients select the right software. These two services are typically done together with the results of the Knowledge Audit driving the Text Analytics Evaluation service.

Text Analytics Knowledge Audit

“Self-Knowledge is the Highest Form of Knowledge” –Socrates

- This consists of two parts. The first part is an analysis of the existing information environment of an organization including content and associated metadata, taxonomies and knowledge graphs, content creation processes, and more. The second part is a series of interviews and focus groups to get a deeper understanding of existing and anticipated information needs and wants. This can be a stand-alone process to produce a strategy map or, more often, as part of a text analytics software evaluation.

Text Analytics Software Evaluation

The Right Tool Can Make the Difference Between Success and Failure

Using a well-tested methodology, we guide organizations in selecting the best fit in text analytics software. Since text analytics can be used for so many applications, the process begins with a deep requirements gathering or Knowledge Audit. We currently track about 45 text analytics companies and we map those requirements to drive a progressive filtering process to find the top 1 or 2 best fits. The last step is a short POC to ensure that the final selection works in their environment.

We also offer three “Mini” engagements that can both educate organizations and create an initial foundation for full-scale development.

- Mini-Strategy: A one week engagement that starts with the Introduction to Text Analytics followed by a series of discussions and focus groups to develop a deeper understanding of the organization’s information environment. The output is an enterprise strategy map to guide the organization in developing text analytics applications.

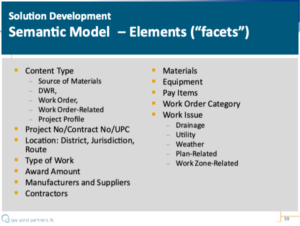

- Mini-POC: A one week engagement that develops auto-categorization rules to demonstrate the power of text analytics. We use advanced content modeling and categorization techniques to achieve 90%+ accuracy. The Mini-POC can be shared and thus create a compelling example that can engender acceptance in ways that merely describing cannot. We also offer a data extraction Mini-POC that develops a metadata model that feeds a set of data facets.

- Mini-GPT POC & Strategy: While ChatGPT, GPT-4 are making headlines around the world, there is one place where their value is more problematic – behind the enterprise firewall. This is because enterprise concepts and vocabularies are significantly different from the public text that GPTs have been trained on. The KAPS Group, in conjunction with our partners have developed a Mini-POC that demonstrates how to use the combination of text analytics and GPT to tag documents. Like out basic Mini-POC for autocategorization, this process consists of developing rules that achieve over 90% accuracy in only 1 week. Based on the outcome of the Mini-POC, we will also develop a comprehensive strategy for combining the strengths of GPT and text analytics.

Development

The KAPS Group has extensive experience in text analytics of all kinds and with most text analytics vendors. We offer development services for building a text analytics foundation as well as adding text analytics to specific applications such as search, business or customer intelligence applications, or sentiment analysis.

Auto-Categorization

Auto-Categorization is the Brains of the Outfit

The real heart (or mind) of text analytics is its auto-categorization capability where the software is used to categorize the “aboutness” of a document using either machine learning or semantic rules-based categorization. In addition to capturing the meaning of documents, this capability can also be used to add intelligence to the data extraction capability and all the applications that can be built with text analytics. Sentiment or intent analysis is a special case of auto-categorization focused on the sentiment or intent expressed in those documents.

Data Extraction / Metadata Generation

80% of Critical Business Information is Locked in Unstructured Text

The second key capability is data extraction. While AI can do some amazing things with data, over 80% of essential data is “hidden” within unstructured text. Text analytics can intelligently extract that data which then can be incorporated into multiple applications using AI or other standard data processing techniques. Typically, most vendors ship with basic sets of entities like people, location, and organizations or companies. In addition, the best software enables building rules to extract not only other kinds of entities but also the even more valuable relationships between those entities.

Content Structure Models

From Useless Chaos to Useful Structure

There is no such thing as unstructured text. All text has some structure and using text analytics tools, we can use content structure to improve both auto-categorization and data extraction. The KAPS Group has been developing content structure models (CSM) which we use to greatly improve accuracy of search and other applications. We also use CSMs to improve data extraction by focusing on the parts of documents where key data is found and ignoring the rest.

Using content structure models, we have improved recall by 25% and improved precision by over 50%. The enhanced accuracy supports applications that produce better results while saving time and money.

Sections that function as an executive summary or abstract represent the author’s human judgment of what the document is about and should be weighted more heavily than the body of the document. Sections such as Acknowledgements or an Appendix can be ignored or weighted less. Key data can be extracted from tables and other data sections and then fed into multiple applications.

Taxonomy and Ontology Development

Embodied Taxonomies and Ontologies

Text analytics almost always needs a taxonomy or ontology. Most text analytics consultants including ours have extensive experience in developing taxonomies. However, we specialize in developing embodied taxonomies, that is, taxonomies that are built on a focus on actual enterprise content, using our text analytics tools. We’ve seen too many cases where a taxonomist, using traditional methods, produces a taxonomy that is elegant but has no bearing on the actual content and so can’t be used for tagging that content.

Applications

Once documents have been categorized and key data has been extracted, the number of applications that can be built is almost endless. The highly valuable benefits range from saving time and money to generating new applications that can transform your organization.

Below are a few of the most common and most valuable.

Smarter and Safer Gen AI

Best of Both Worlds

For all their successes, Gen AI applications have several issues: hallucinations, transparency and security, generic vocabularies, and the amount of training data. Combining Gen AI with auto-categorization cures all of these issues. We can capture enterprise vocabularies with text mining, quickly generate training data, create complex Python code prompts, and process the output to fact check automatically and flag any sensitive text.

Auto-tagging and Hybrid tagging

Consistent and Transparent Tags

Asking humans to tag documents has been shown over and over again to be worse than a waste of time for both taggers and users. Text analytics auto-categorization tagging the aboutness of documents combined with data extraction to feed multiple facets is a much better answer. The best results are typically obtained with a hybrid approach: have a human review of the auto-categorization. It requires much less time and thought for taggers and produces much more accurate and consistent tags.

Search and search-based applications.

You Can’t Use It If You Can’t Find It

Keyword search returns both too many documents (happen to mention the keyword but are about something else) and too few documents (misses alternate terminology). For example, the concept of drainage can be expressed using words that refer to related terms or sub-topics of drainage such as flooding, manholes, stopped up, ditch, ponding – all terms that can be incorporated into an auto-categorization rule. In a recent project we compared search using auto-tags with no structure with auto-tagging using a content structure model.

In addition, data extraction can easily and cheaply populate multiple facets that have been shown to dramatically improve the search experience. A faceted search application we developed for the Dept. of Transportation:

Asking humans to generate this much metadata, tends to produce unhappy authors and sloppy metadata.

Social Media Analysis and Sentiment Analysis

What Are People Saying About Your Company, Your Products?

Sentiment analysis of positive and negative sentiments in social media posts is one of the most common text analytics applications. When done with dictionaries of negative and positive words it typically has low accuracy, but when supplemented with full auto-categorization functionality, can be a powerful tool in voice of the customer applications and other areas of social media analysis.

One common applications is survey analysis where the text analytics software can be used to analyze the free text survey questions. This typically involves both auto-categorization and data extraction.

Business Intelligence and Customer Intelligence

Data Tells You What, Text Tells You Why

Text analytics adds the powerful resource of unstructured text to any existing data applications. Data tells you what your customers or competitors are doing. Text tells you why. For example, it can track subscriber mood before and after a call and why that mood changed.

Text analytics can also be used for the tracking of a recurring problem categorized by customer, client, product, parts, or by representative. It can be used for fraud detection (text with lies have different patterns than truthful text). It can also be used for behavior prediction, distinguishing customers who have called to cancel from those who are really calling to bargain for something and are merely threatening to cancel.

Analytical Applications and Trend Analysis

Enriching Multiple Applications

By adding the data embedded in unstructured text, text analytics can deeply enrich existing analytical applications such as trend analysis, e-discovery, and others. The number and breath of possible applications is restricted only by your imagination. For example, once the data facets for the DOT example was developed, it was possible to develop an application that could answer questions such as:

Find the work orders and contract documents for all projects that were closed in <time range> and had at least one work order that extended the budget or schedule. Pull out the reasons for the changes, along with the type of project, district, and main contractor name.

Training Data for AI/GPT

Good AI Requires Enormous Training Sets

AI is only as good as its training data and the best way to get good training data is using text analytics. Using auto-categorization can greatly improve the quality of training data and also require an order of magnitude less human effort, at a huge cost savings. The weakest area for ChatGPT and other Large Language Models is the lack of training content from within the enterprise.